Traditional robot control methods such as switches, buttons, and joysticks often require physical effort and limit natural human interaction. To overcome these limitations, gesture-based control provides a more intuitive, flexible, and human-friendly interface.

As part of Creative Wheeled Robotic Projects, the Gesture Controlled Robot enables users to control the movement of a wheeled robot using simple hand gestures. These gestures are captured using sensors such as accelerometers or flex sensors and processed by a microcontroller to determine direction and speed.

Based on the detected gestures, the robot can move forward, backward, left, right, or stop, making the interaction more natural and responsive compared to traditional control systems.

In this project:

- The MPU6050 sensor detects hand tilt and motion.

- The microcontroller processes the sensor data.

- The robot's motors move according to the detected gestures.

Key Features:

- Control based on natural hand movement

- Motion sensing with a combined accelerometer and gyroscope

- Optional wireless control over RF or Bluetooth

- Well suited to assistive and robotic applications

This project demonstrates the integration of motion sensing and robotics.

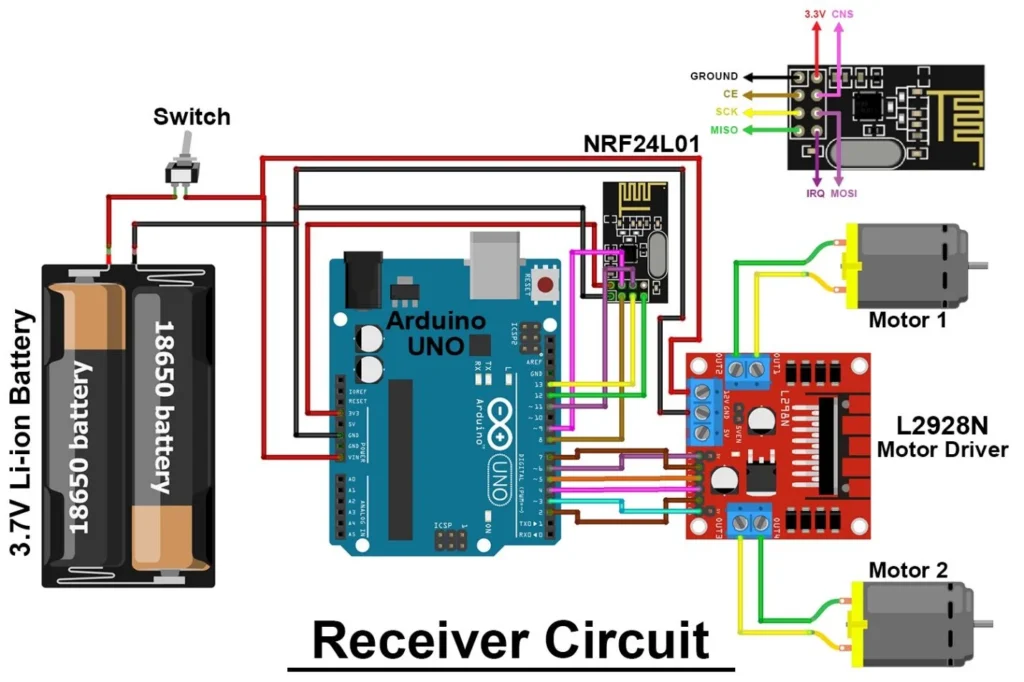

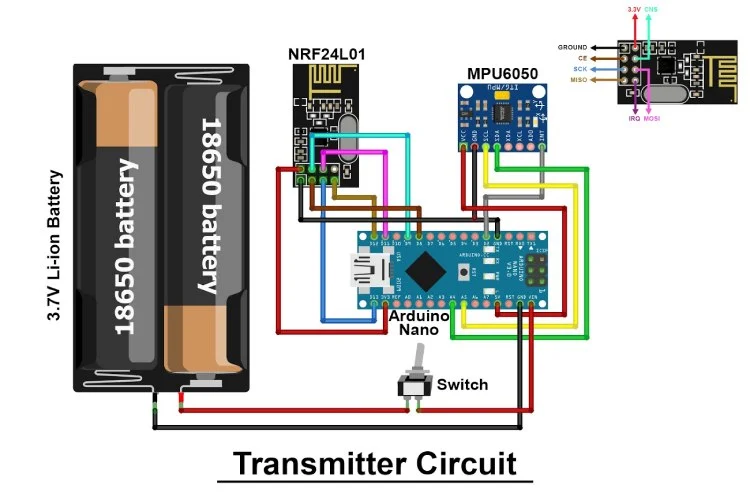

Connection Description (Wiring Map)

Main Components:

- Microcontroller: Arduino Uno / Nano

- MPU6050 Sensor Module

- Motor Driver: L298N / L293D

- DC Motors & Chassis

- Wireless Module: Bluetooth HC-05 / RF (Optional)

- Power Supply: Battery pack

Wiring Summary

MPU6050 Connections (I2C Communication):

| MPU6050 Pin | Arduino Pin | Description |
| --- | --- | --- |
| VCC | 5V | Power supply |
| GND | GND | Ground |
| SDA | A4 | I2C data |
| SCL | A5 | I2C clock |
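
Once the sensor is wired, each accelerometer axis arrives over I2C as two bytes, high byte first. The sketch below shows one way to turn those bytes into a reading in g. The register address, I2C address, and scale factor come from the MPU6050 register map at its default ±2 g range; the constant and function names (`kMpuAddress`, `combineBytes`, `rawToG`) are illustrative and not part of any library.

```cpp
#include <cstdint>

// Values from the MPU6050 register map; the names are our own.
constexpr std::uint8_t kMpuAddress = 0x68;  // I2C address when AD0 is tied low
constexpr std::uint8_t kAccelXoutH = 0x3B;  // first of six accelerometer data bytes
constexpr float kLsbPerG = 16384.0f;        // sensitivity at the default +/-2 g range

// Join the high and low register bytes into one signed 16-bit sample.
std::int16_t combineBytes(std::uint8_t high, std::uint8_t low) {
    return static_cast<std::int16_t>(
        static_cast<std::uint16_t>((high << 8) | low));
}

// Convert a raw accelerometer sample to units of g.
float rawToG(std::int16_t raw) {
    return static_cast<float>(raw) / kLsbPerG;
}
```

On the Arduino, the bytes would typically be fetched with the `Wire` library starting at register `0x3B`, after waking the sensor by writing 0 to its power management register (`0x6B`).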

Motor Driver Connections:

| Motor Driver Pin | Arduino Pin | Description |
| --- | --- | --- |
| IN1 | D4 | Left motor control |
| IN2 | D5 | Left motor control |
| IN3 | D6 | Right motor control |
| IN4 | D7 | Right motor control |
| ENA/ENB | PWM / Jumper | Speed control |
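
The table above can be expressed as a small lookup from movement command to the four driver inputs. This is a minimal sketch under two assumptions that depend on how the motors are wired: that IN1 high with IN2 low drives the left motor forward (and likewise IN3/IN4 for the right motor), and that turns are made by spinning the two wheels in opposite directions. The `Command` and `DriverInputs` types are hypothetical names introduced for illustration.

```cpp
// Movement commands the robot understands (illustrative names).
enum class Command { Stop, Forward, Backward, Left, Right };

// Logic levels for the four motor driver inputs.
struct DriverInputs {
    bool in1, in2;  // left motor  (Arduino D4, D5)
    bool in3, in4;  // right motor (Arduino D6, D7)
};

// Assumed wiring: INx high / INy low = that motor runs forward.
DriverInputs inputsFor(Command c) {
    switch (c) {
        case Command::Forward:  return {true,  false, true,  false};
        case Command::Backward: return {false, true,  false, true};
        case Command::Left:     return {false, true,  true,  false};  // left back, right forward
        case Command::Right:    return {true,  false, false, true};   // left forward, right back
        case Command::Stop:     break;
    }
    return {false, false, false, false};  // all inputs low: both motors stopped
}
```

On the Arduino, each field would be written to its pin with `digitalWrite`. If a motor turns the wrong way, swapping its two leads or inverting its pair of levels here corrects it.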

Working Principle:

- The MPU6050 senses hand tilt along the X and Y axes.

- The Arduino reads the accelerometer and gyroscope values via I2C.

- The tilt is mapped to a gesture:

  - Tilt forward → Robot moves forward

  - Tilt backward → Robot moves backward

  - Tilt left → Robot turns left

  - Tilt right → Robot turns right

  - Flat position → Robot stops

- The Arduino sends the corresponding commands to the motor driver.

- The motors move the robot accordingly.
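
The gesture mapping above can be sketched as a threshold test on the X and Y accelerometer readings. The threshold of 0.35 g (roughly 20 degrees of tilt) and the axis directions (+X for a forward tilt, +Y for a right tilt) are assumptions; both depend on how the sensor is mounted on the hand and should be tuned by experiment. The `Gesture` type and `classifyTilt` function are hypothetical names.

```cpp
// Gestures recognised from hand tilt (illustrative names).
enum class Gesture { Flat, Forward, Backward, Left, Right };

// Assumed dead zone: readings below this magnitude count as "flat".
constexpr float kTiltThresholdG = 0.35f;

// Classify a tilt from X and Y acceleration in g.
// Assumed orientation: +X = tilted forward, +Y = tilted right.
Gesture classifyTilt(float axG, float ayG) {
    const float absX = axG < 0.0f ? -axG : axG;
    const float absY = ayG < 0.0f ? -ayG : ayG;

    // Inside the dead zone on both axes: the hand is held flat.
    if (absX < kTiltThresholdG && absY < kTiltThresholdG) {
        return Gesture::Flat;
    }
    // Otherwise the axis with the larger tilt decides the gesture.
    if (absX >= absY) {
        return axG > 0.0f ? Gesture::Forward : Gesture::Backward;
    }
    return ayG > 0.0f ? Gesture::Right : Gesture::Left;
}
```

Letting the dominant axis decide avoids ambiguous diagonal tilts, and the dead zone keeps the robot stopped when the hand is only slightly off level.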

Testing the Hardware:

MPU6050 Sensor Test

- Read the tilt values in the Serial Monitor.

- Verify that the values change when the sensor is tilted.
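
For the sensor test it can help to print tilt angles in degrees rather than raw values. The sketch below estimates them from the three accelerometer readings using the usual gravity-vector formulas, which hold only while the hand is roughly still. Which angle is called pitch and which roll is an assumption that depends on the sensor's mounting, and `Tilt` and `tiltFromAccel` are hypothetical names.

```cpp
#include <cmath>

// Tilt angles in degrees (illustrative type).
struct Tilt {
    float pitchDeg;  // rotation that raises or lowers the X axis
    float rollDeg;   // rotation that raises or lowers the Y axis
};

// Estimate tilt from accelerometer readings (any consistent unit, e.g. g).
// Valid when the sensor is nearly stationary, so gravity dominates.
Tilt tiltFromAccel(float ax, float ay, float az) {
    constexpr float kRadToDeg = 57.29578f;
    Tilt t;
    t.pitchDeg = std::atan2(ax, std::sqrt(ay * ay + az * az)) * kRadToDeg;
    t.rollDeg  = std::atan2(ay, std::sqrt(ax * ax + az * az)) * kRadToDeg;
    return t;
}
```

With the sensor lying flat, both angles should read close to zero, and each should change smoothly as the board is tilted about the corresponding axis.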

Motor Test

- Test each motor individually through the motor driver.

Gesture Test

- Tilt the MPU6050 in different directions.

- Observe the corresponding robot movements.

Full System Test

- Mount the MPU6050 on a glove or handheld board.

- Control the robot using hand gestures.

Applications:

- Assistive robotics

- Wheelchair control systems

- Industrial robot control

- Gaming and VR interfaces

- Defense and rescue robots

Troubleshooting:

| Problem | Possible Cause | Solution |
| --- | --- | --- |
| MPU6050 not detected | I2C wiring issue | Check SDA/SCL connections |
| Robot moves incorrectly | Wrong gesture mapping | Adjust threshold values |
| Robot not moving | Motor driver issue | Verify motor power supply |
| Erratic movement | Sensor noise | Use filtering in code |
| Arduino resets | Power drop | Use a separate motor battery |
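
For the "use filtering in code" suggestion, one simple option is an exponential moving average applied to each accelerometer axis before gesture detection. This is a sketch of that one technique, not necessarily the filter the project uses; the class name `LowPassFilter` and the smoothing factor are illustrative choices.

```cpp
// Exponential moving average: a simple first-order low-pass filter.
class LowPassFilter {
public:
    // alpha in (0, 1]: smaller values smooth more but respond more slowly.
    explicit LowPassFilter(float alpha) : alpha_(alpha) {}

    // Feed one new sample and get the smoothed value back.
    float update(float sample) {
        if (!primed_) {
            value_ = sample;  // start from the first reading, not from zero
            primed_ = true;
        } else {
            value_ += alpha_ * (sample - value_);
        }
        return value_;
    }

private:
    float alpha_;
    float value_ = 0.0f;
    bool primed_ = false;
};
```

One filter instance per axis would be updated on every sensor read, for example `LowPassFilter filterX(0.2f);`. A smaller `alpha` removes more jitter at the cost of a slower response to deliberate gestures.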

A Gesture Controlled Robot is a robotic system that moves in response to human hand gestures instead of traditional controls such as buttons, joysticks, or remote controls. This enables natural and intuitive interaction between humans and machines, making robot operation more flexible and user-friendly.

The project primarily uses the MPU6050, which combines an accelerometer and a gyroscope to detect hand movements in real time. The sensor captures motion data such as the tilt, rotation, and orientation of the hand. A microcontroller (such as an Arduino or ESP32) then processes these signals to interpret specific gestures such as forward, backward, left, and right.

When a wireless link is used, the processed data is transmitted to the robot unit through a communication module such as Bluetooth (HC-05/HC-06) or an RF module. Based on the received commands, the robot’s motor driver controls the DC motors or servo motors, allowing the robot to navigate accordingly.

The system is based on the principles of motion sensing and inertial measurement, which let it detect gestures without the user touching the robot or a conventional controller. This gives a responsive and interactive way to control robotic systems.

Gesture-controlled robots are used in robotics research, assistive healthcare systems (especially for users with physical disabilities), industrial automation, and military applications where hands-free control is essential. They can also be used in smart home systems and in educational robotics for interactive learning.

With further enhancements, the system could include machine learning for gesture recognition, voice control integration, and real-time feedback.